Automation - The Saviour?

So you want to automate your testing to solve all of your testing woes? Because automated tests will suddenly do the work of 200 testers in a few hours, right?

Well, not quite. There are many things to consider and understand if you really want to make the right decision on automation for your team. Investing in test automation has a cost, and this cost can get higher if you don't really understand what you are getting into. That said, test automation can bring a lot of value, and the aim of anyone investing in it is to ensure the value gained is higher than the cost. What follows is by no means an exhaustive list and I don't profess to be an expert. It is, however, based on a lot of experience (both positive and negative) and is the result of lots of dead ends and soul searching.

- The first thing I would encourage you to consider is what automated tests you want. Think about how quickly you want your tests to give you pass/fail feedback. Unit tests and other code based tests give you the quickest feedback loop, as they can be run every time code is checked in. In my experience, any team should aim to get as much of its automated testing into the code as possible. If that is a challenge, for any one of a million reasons, then reconsider again and again, looking for ways to get your code into a suitable state to write code based tests against. If you can find a way of achieving this as early as possible you will save yourself a lot of pain.
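To make "code based tests" concrete, here is a minimal sketch using Python's built-in `unittest` module. The `apply_discount` function is a hypothetical piece of product code that I have made up purely for illustration; the point is only that checks like these run in milliseconds and can therefore run on every check-in.

```python
import unittest


def apply_discount(price: float, percent: float) -> float:
    """Hypothetical product code: return the price after a percentage discount."""
    if not 0 <= percent <= 100:
        raise ValueError("percent must be between 0 and 100")
    return round(price * (1 - percent / 100), 2)


class ApplyDiscountTests(unittest.TestCase):
    """Each test checks one expected behaviour and gives a clear pass/fail."""

    def test_typical_discount(self):
        self.assertEqual(apply_discount(200.0, 25), 150.0)

    def test_zero_discount_leaves_price_unchanged(self):
        self.assertEqual(apply_discount(99.99, 0), 99.99)

    def test_invalid_percent_is_rejected(self):
        with self.assertRaises(ValueError):
            apply_discount(100.0, 150)
```

Saved in a file such as `test_discount.py` (the name is an assumption), these could be run with `python -m unittest` as part of a build.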

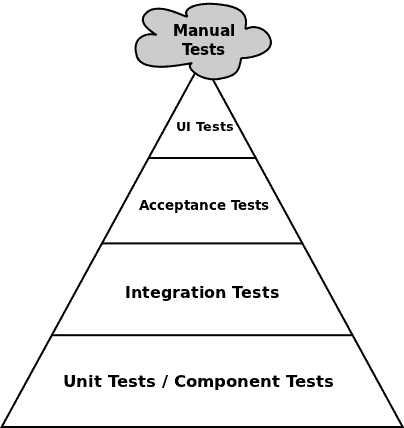

If you hit a brick wall and feel that an automated testing tool (non code based tests) is your only option for some of the testing, then look at whether your product has APIs or endpoints you can hook into. There can be a number of reasons for ending up here, such as the state of code that has been developed over a number of years, or a lot of logic sitting in the UI layer. If you can automate while avoiding the UI then you should do so for the majority of your tests, as you will avoid a lot of maintenance overhead when the product changes (which it inevitably will). Use the testing triangle as a guide to how much of your testing should be covered where. The cost of testing goes up the higher up the triangle you go, while coverage is higher towards the bottom.

UI automation can still be valuable and can give a better representation of the user in some cases. If you are absolutely adamant you need UI automation, keep it to thin stripes through the product and avoid XY co-ordinates for actions, so that the tests are affected as little as possible by changes in the software.
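The problem with XY co-ordinates can be shown with a toy model. This is not a real UI automation tool: the `Widget` and `Screen` classes below are invented for illustration, and the layouts are made up. The sketch only shows why an action tied to an identifier survives a layout change while an action tied to a screen position does not.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class Widget:
    """A clickable rectangle with a stable identifier (toy model, not a real tool)."""
    automation_id: str
    x: int
    y: int
    width: int = 80
    height: int = 30

    def contains(self, px: int, py: int) -> bool:
        return (self.x <= px < self.x + self.width
                and self.y <= py < self.y + self.height)


class Screen:
    def __init__(self, widgets: List[Widget]):
        self.widgets = widgets

    def click_at(self, px: int, py: int) -> Optional[str]:
        """Click whatever happens to be at the co-ordinates; return its id."""
        for widget in self.widgets:
            if widget.contains(px, py):
                return widget.automation_id
        return None

    def click_by_id(self, automation_id: str) -> Optional[str]:
        """Click the widget with the given id, wherever it is on screen."""
        for widget in self.widgets:
            if widget.automation_id == automation_id:
                return widget.automation_id
        return None


# Release 1: Save is top left. Release 2: Cancel has taken its place.
release_1 = Screen([Widget("save", 10, 10), Widget("cancel", 200, 10)])
release_2 = Screen([Widget("cancel", 10, 10), Widget("save", 200, 10)])

for name, screen in (("release 1", release_1), ("release 2", release_2)):
    print(name, "by position:", screen.click_at(20, 20),
          "| by id:", screen.click_by_id("save"))
```

In the second layout the positional click lands on Cancel without any error being raised, which is arguably worse than a test that simply fails.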

Also consider what the tests are testing for. Automated testing might apply to performance and load, in which case you need to look at it from a slightly different angle. For load tests in particular you will generally be looking at specialised tools that build the load using agents, virtual users or similar. The question becomes more about how important these tests are for you. Will your software ever get really heavy load, or will understanding performance for a single user be enough?

Most importantly of all, even if you are not writing code based automated tests, remember the golden rule about feedback and aim to make the testing feedback loop as quick as possible.

Even in a UI automation world, keep your tests as small as possible and run them as often as possible against a controlled and repeatable environment. Start from having them on a continuous loop (picking up the latest build and running again as soon as they finish) and work backwards. If having them running continuously isn't possible, can they run twice a day? Overnight? Or, as an absolute worst case scenario, weekly?

By running them as often as possible you are doing two things:

- Keeping the feedback loop of the tests as quick as possible

- Making the tests easier to maintain (if a valid change to the software causes the tests to break and hasn't been identified up front, a smaller time between running the tests gives you a smaller window of changes that could have broken the tests)
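The second point is simple arithmetic, sketched below. The figure of 12 commits per working day is made up, and the function name is my own; plug in your own numbers.

```python
def suspect_commits(commits_per_day: float, days_between_runs: float) -> float:
    """Average number of commits that land between two consecutive test runs.

    When a run fails, this is roughly how many changes you have to look
    through to find the one that broke the tests.
    """
    if commits_per_day < 0 or days_between_runs <= 0:
        raise ValueError("commits_per_day must be >= 0 and days_between_runs > 0")
    return commits_per_day * days_between_runs


COMMITS_PER_DAY = 12  # assumed figure, for illustration only

# Schedules from the text, expressed as working days between runs.
schedules = {"twice a day": 0.5, "overnight": 1, "weekly": 5}

for label, days in schedules.items():
    print(f"{label}: about {suspect_commits(COMMITS_PER_DAY, days):g} commits to investigate")
```

With these assumed numbers, a weekly run leaves ten times as many candidate changes to investigate as a twice-daily run.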

You need to understand that automated testing is really just automated checking. It will only check what you tell it to check and is only as good as the checks you identify. It isn't going to suddenly transform your testing if you aren't already able to find the best way of testing your product manually. I know many would disagree and consider it waste, but I think that where possible you should only automate what you have already defined as manual tests validating expected product behaviour. Having appropriate manual tests for all of your automated tests is a good reference point for what each test is trying to prove, particularly if you have different people picking up and putting down the tests for execution or maintenance. Let's be honest, writing code blindly without fully understanding the user's requirements is a bad idea, whether that is for test or development purposes. Having clear behavioural tests will also help you to avoid automating tests against how the functionality currently works rather than against the expected behaviour. A test failing should get you to question the product, not the test. Having confidence that the tests represent a customer's expectations/requirements is therefore massively important if you want to avoid regular arguments about the tests or, potentially even worse, people becoming apathetic towards the tests and just ignoring them.

Ownership of tests is a big consideration for anyone considering automated testing. Consider carefully how you work. Do you have, or are you aiming for, a continuous integration/continuous delivery model? If you are, then consider how tests will fit into your delivery pipeline and therefore how long they will take to run. More importantly, if the tests look to be broken, consider who you want to fix them, what their availability is and how long it will take. Working in Agile teams in a continuous integration/delivery environment, you need the team to have the ability to identify and solve any problem that comes up. In a short iteration, or with single piece flow/WIP limit practices, you really don't want to be waiting on other teams to fix the tests or update them in line with changes made. All of that said, getting a team to take ownership of something written by a completely separate group, without fully understanding the goals or having had input into how it was written, is a big challenge. Where you really want to be is that appropriate tests are part of the deliverable from a team, giving the team confidence in and understanding of the tests and therefore increasing the value (or perceived value) of your tests in the delivery pipeline.

- But we barely have time to do the development work in the timescales without writing the automated tests as well!

If the tests (checks) are necessary then they need to be done somewhere before you can release the software and get any value out of the changes you are making. If you aren't automating them then you are running them manually, and if the area of the software is high impact and/or high risk then you are probably going to be changing it, and therefore testing it, on a regular basis. That can add up to a high cost of test (see the test triangle) for basic checks that automation can do. It also reduces the time your testers could spend working with the team, helping to build quality in or completing valuable 'testing' that automation can't perform. Maybe planning in time for test automation isn't such a high expense after all, especially if you are creating code based tests.

- But I'm a developer, it's not my job to write tests

I'm not even going to justify this with a response. It's your job to get software that is fit for purpose to customers.

There may still be reasons within your control or outside of your control that mean having automation created by a separate team is absolutely desirable/necessary. If this is the case it doesn't mean all ownership must be lost, it just means the teams need to work a little harder and be a little more disciplined on communication. Consider treating the automated tests as software in their own right. Get the team delivering the product/changes sitting down with those writing the tests to agree the deliverables, aim of the tests and approach to writing the tests. Maybe get regular touch points between the two groups to discuss progress and get sight of the automated tests being written allowing input and suggestions from both sides to be discussed. Look at implementing code reviews by the product development team over the automated tests being created on a regular basis. This will all increase the chances of the automated testing being co-owned by the two teams and increase the pool of people able and willing to maintain the tests.

- Understanding the tooling options and what best fits your needs is vital. Failure to do due diligence on this, and to really understand the previous points before committing to a given tool and approach, can send you steaming down a route that eventually hits a wall or needs to change. That leaves you with large numbers of assets (created at a large cost) that either become unusable or need extra effort to transfer. Alternatively, you could find yourself with high licensing costs for software that sits on the shelf because you can't find a way of introducing it without breaking delivery.

Assuming you have given careful consideration to all of the previous points and have:

- decided how much testing you can do in the code (based on how you are developing software or want to develop software),

- ensured you are clear on how you are going to provide traceability/understanding of the tests you want to automate, and

- formed a clear view of who will own, maintain and execute the tests,

then you need to find a tool that fits your needs.

There will always be pros and cons to any tool you decide to use, so don't go looking for perfection or you are likely to end up disappointed. Below are a few categories and examples to consider:

Code based test automation

These are more frameworks than tools, allowing unit tests built into the code to be executed and the results presented back. Building unit tests into your development activity takes a relatively technical skillset, and you need an understanding of how to pass inputs in and assess outputs through the code base. Stubs, mock objects and test harnesses are useful options to help control the test inputs and outputs. Getting value out of unit testing (as opposed to just having tests in the code for the sake of it) is something that is often missed.
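As a rough illustration of controlling inputs and outputs with a mock, here is a sketch using `unittest.mock.Mock` from the Python standard library. `OrderService` and its payment gateway are hypothetical names invented for this example; the mock stands in for a dependency you would not want to call for real in a unit test.

```python
from unittest.mock import Mock


class OrderService:
    """Hypothetical product code that depends on an external payment gateway."""

    def __init__(self, gateway):
        self.gateway = gateway

    def place_order(self, customer_id: str, amount: float) -> str:
        if amount <= 0:
            raise ValueError("amount must be positive")
        accepted = self.gateway.charge(customer_id, amount)
        return "confirmed" if accepted else "payment_declined"


def test_order_confirmed_when_payment_accepted():
    gateway = Mock()
    gateway.charge.return_value = True  # control the input from the dependency
    assert OrderService(gateway).place_order("cust-1", 25.0) == "confirmed"
    gateway.charge.assert_called_once_with("cust-1", 25.0)  # assess the output


def test_order_declined_when_payment_refused():
    gateway = Mock()
    gateway.charge.return_value = False
    assert OrderService(gateway).place_order("cust-1", 25.0) == "payment_declined"


def test_gateway_not_called_for_invalid_amount():
    gateway = Mock()
    try:
        OrderService(gateway).place_order("cust-1", 0)
    except ValueError:
        pass
    else:
        raise AssertionError("expected ValueError for a zero amount")
    gateway.charge.assert_not_called()
```

The tests are written as plain functions with `assert` so that a runner such as pytest could collect them, but they can equally be called directly.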

Test Driven Development and Behaviour Driven Development are test-first methodologies: you identify the tests up front, write the tests (which initially fail) and then develop the solution until the tests pass. As already identified, code based tests have the value of minimal overhead (you are already developing the product, so adding extra code to test it up front is much cheaper than trying to write tests after the fact), high coverage and fast feedback (tests are run on every check-in of code).

Arguably the most common framework family for code based testing is xUnit (NUnit, JUnit, FsUnit etc.), although alternatives are becoming more common. For example, those delving into the world of the MEAN stack are likely to be picking up Jasmine, or you may be using (or want to look at) something like TestNG.

API/Integration test automation

The aim of integration tests is to orchestrate integration of different components of the software and ensure they all hang together effectively. If your software/web service has a strong service layer, you can benefit from testing through that layer and interacting with the API rather than relying on the user interface. Integration tests will generally rely on completion and build of a component, as opposed to executing on check-in, so the feedback loop will be longer than for code based tests. However, they are more stable because they do not rely on the user interface, and faster to execute because they interact with the service layer (no dependency on screen loads or object states). That makes integration tests much better suited to a continuous integration/delivery environment than UI automation and, depending on the service layer available in your software, they can potentially give you as much confidence in end to end stability.
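A minimal sketch of testing through a service layer, using only the Python standard library. The tiny `/status` service below is a stand-in I have invented so the example is self-contained; in practice the check would point at your own product's endpoints, and the handler class would not exist in the test code at all.

```python
import http.client
import json
import threading
from http.server import BaseHTTPRequestHandler, HTTPServer


class StatusHandler(BaseHTTPRequestHandler):
    """Stand-in for a product's service layer (hypothetical /status endpoint)."""

    def do_GET(self):
        if self.path == "/status":
            body = json.dumps({"status": "ok"}).encode("utf-8")
            self.send_response(200)
            self.send_header("Content-Type", "application/json")
            self.send_header("Content-Length", str(len(body)))
            self.end_headers()
            self.wfile.write(body)
        else:
            self.send_error(404)

    def log_message(self, format, *args):
        pass  # keep test output quiet


def call_service(path: str):
    """Start the stand-in service, make one GET request, return (status, body)."""
    server = HTTPServer(("127.0.0.1", 0), StatusHandler)  # port 0: pick a free port
    threading.Thread(target=server.serve_forever, daemon=True).start()
    try:
        port = server.server_address[1]
        conn = http.client.HTTPConnection("127.0.0.1", port, timeout=5)
        try:
            conn.request("GET", path)
            response = conn.getresponse()
            return response.status, response.read()
        finally:
            conn.close()
    finally:
        server.shutdown()
        server.server_close()


def test_status_endpoint_reports_ok():
    status, body = call_service("/status")
    assert status == 200
    assert json.loads(body) == {"status": "ok"}


def test_unknown_path_is_rejected():
    status, _ = call_service("/nope")
    assert status == 404
```

Nothing here waits on a screen to load or an object to appear, which is where much of the stability and speed comes from.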

Essentially, integration test tools can be anything from the xUnit or TestNG frameworks to more dedicated testing tools such as LDRA, Citrus or Robot Framework. Even tools like Selenium (whilst not ideal) can be set up to test against the API, but they need to be hooked into a framework such as TestNG or Robot Framework.

UI test automation

You may have already noticed my reluctance to use UI automation, however I'd be lying if I said I hadn't previously made the decision to go down the UI automation route. UI automation essentially interacts with the objects in the UI and provides the most realistic representation of a user, but it suffers from flakiness associated with object recognition and with waiting on objects, return values or task completion. Understanding the flaws (already discussed) in relation to ownership, regular test execution, size of the tests and control over the environment and data can help you derive value from UI automation, especially if you have software with a limited/non-existent service layer or a large amount of business logic in the UI. Automation IDs can also be put into the UI layer to help with interaction but, whilst they help, don't expect them to be a silver bullet.
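Much of the flakiness around waiting comes from fixed sleeps that are either too short (the test fails) or too long (the test is slow). A common alternative is to poll for a condition up to a timeout. The helper below is a generic sketch of that idea and not the API of any particular tool; `wait_until` and `FakeButton` are names I have made up, and most UI automation tools ship their own equivalent.

```python
import time


def wait_until(condition, timeout: float = 5.0, interval: float = 0.1):
    """Poll `condition` until it returns a truthy value, then return that value.

    Raises TimeoutError if the condition is still falsy once `timeout`
    seconds have passed.
    """
    deadline = time.monotonic() + timeout
    while True:
        result = condition()
        if result:
            return result
        if time.monotonic() >= deadline:
            raise TimeoutError(f"condition not met within {timeout} seconds")
        time.sleep(interval)


class FakeButton:
    """Toy stand-in for a UI object that only becomes enabled after a delay."""

    def __init__(self, enabled_after: float):
        self._ready_at = time.monotonic() + enabled_after

    def is_enabled(self) -> bool:
        return time.monotonic() >= self._ready_at


# The test continues as soon as the button is ready, instead of always
# sleeping for the worst case.
button = FakeButton(enabled_after=0.2)
wait_until(button.is_enabled, timeout=2.0, interval=0.05)
```

A timeout still has to be chosen, so this reduces the flakiness rather than removing it.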

UI automation test tooling has a variety of options for all budgets if you have a web based product. These range from open source tooling such as Selenium, Robot Framework and Watir to proprietary solutions with a higher cost such as Ranorex, TestComplete (SmartBear) or HP's Unified Functional Testing (formerly QuickTest Professional). If you are testing a web product and decide you need to test against the UI, then I would be surprised if you can find many reasons to pay for proprietary testing software as opposed to making Selenium work for you.

If you are working against a desktop application then you are a little more restricted. The proprietary options are much the same, with HP UFT, TestComplete and Eggplant leading the way and providing broadly similar capability at varying price points. Robot Framework (with the AutoIt library) is worth consideration from the open source pot. Alternatively, if you are a Microsoft house with .NET Framework based products you may have a couple of options, dependent on your licensing. Coded UI has the benefit of feeding into the Microsoft performance test capabilities. TestStack White should also be considered if you are working with the .NET Framework, and it can be used in conjunction with Coded UI as it is built on top of Microsoft's UI Automation library. I have found White to be an option that helps with the ownership consideration and receives a much better reception if you want developers to be part of the UI test automation solution.

What Now?

As stated previously, this is not meant as a guide to follow; these are just some of my own thoughts. You will come across a broad spectrum of opinions on the use of automated testing, and I would suggest anyone looking to get value out of automation does their own research and considers a variety of information. So many factors will influence what test automation is best suited to you, your team, your product and your situation, and at the end of the day only you understand those factors fully. Don't go into test automation half-heartedly. Be prepared to invest continually in making it a success, adapt to the challenges you encounter and get everyone on board with building it into your planning and delivery. Encourage a mindset that if a test is broken you either fix the test or the code, but never ignore it. Don't be afraid to find ways to change and improve to get the most out of test automation, but build your test automation on the foundations of quickest possible test feedback, testing early and testing often.